AI is changing what disaster recovery has to cover, and how MSPs can deliver it.

On the threat side, attackers are moving faster, automating reconnaissance, and turning stolen data into targeted extortion. This is compressing the window MSPs have to respond.

The bigger shift for MSPs is everything else AI changes about the job. Clients are layering AI workflows, agents, and connected SaaS into daily operations faster than recovery plans can keep up. At the same time, AI is giving MSPs new ways to manage recovery itself: earlier risk detection, automated backup validation, smarter restore point selection, and evidence-backed testing that used to take days of manual work.

This new landscape comes with risk, but also with clear opportunities. The MSPs that adapt fastest can move disaster recovery out of the commodity backup conversation and into a higher-margin cyber resilience offering.

This article covers three questions every MSP should be working through right now:

- What new risks does AI introduce to disaster recovery?

- Where can AI actually help recovery?

- How can MSPs turn this into a higher-margin service?

AI Is Compressing the Recovery Window

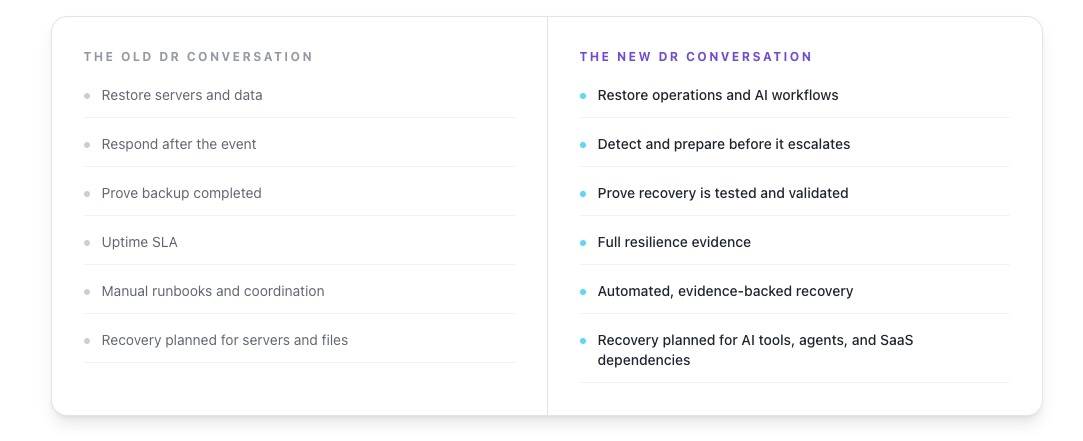

The old model assumed there would be time to gather the team, review the runbook, assign tasks, and coordinate recovery.

That assumption is breaking down.

Recent industry research shows ransomware breakout time, the window between initial access and lateral movement, has dropped from 48 minutes in 2024 to 18 minutes in mid-2025 (1). AI-assisted attackers are using automation to scan environments, escalate privileges, and exfiltrate data faster than most manual recovery processes can keep up with.

A few patterns are accelerating this trend:

- AI-generated phishing is more convincing and easier to localize across languages

- Low-skill attackers can produce functional malware by iterating with AI models

- Reconnaissance and credential theft are increasingly automated

- Encryption now happens in hours, not days, in some incidents

For MSPs, the practical takeaway is straightforward. A recovery strategy that depends on stale documentation, manual coordination, and humans making decisions under pressure is not built for machine-speed attacks.

That does not mean every client needs an enterprise-grade RTO. It does mean recovery plans need earlier anomaly detection, prebuilt workflows, validated restore points, and tested failover procedures. The minutes spent figuring out what to recover are minutes the business is already losing.

Backups Do Not Guarantee Operations Resume

Backups confirm data exists. Backup recovery restores that data. Neither one guarantees the business keeps running if the data is corrupted.

AI-powered extortion raises a different question: what damage can be done with the data that has already left?

Modern backup practices have been working. Immutable storage, air-gapped repositories, and faster restore tooling have made it harder for attackers to monetize encryption alone. Many organizations can now restore quickly enough that paying a ransom for a decryption key looks unnecessary.

Attackers have adapted.

The current ransomware model is shifting toward exfiltration combined with AI-driven analysis. Large language models can process hundreds of gigabytes of stolen emails, chat logs, contracts, and internal documents in minutes. Attackers use that analysis to identify financial pressure points, regulatory exposure, embarrassing communications, or specific individuals to threaten (2). The extortion message is no longer generic. It is targeted, specific, and harder to dismiss.

This is where the old MSP model breaks down.

“We have backups” no longer covers the full risk. Backups restore availability. They do not erase stolen data, undo reputational damage, satisfy regulators, or quiet a customer base that just learned their information was leaked. A client who recovers operations in two hours can still spend the next twelve months managing the consequences of a data exposure event.

For MSPs, that has two implications. First, the disaster recovery conversation needs to expand beyond uptime to include data exposure, identity recovery, and incident communication. Second, services that provide evidence of preparation, including immutable backup, isolated recovery environments, and identity controls, become easier to sell on something other than fear.

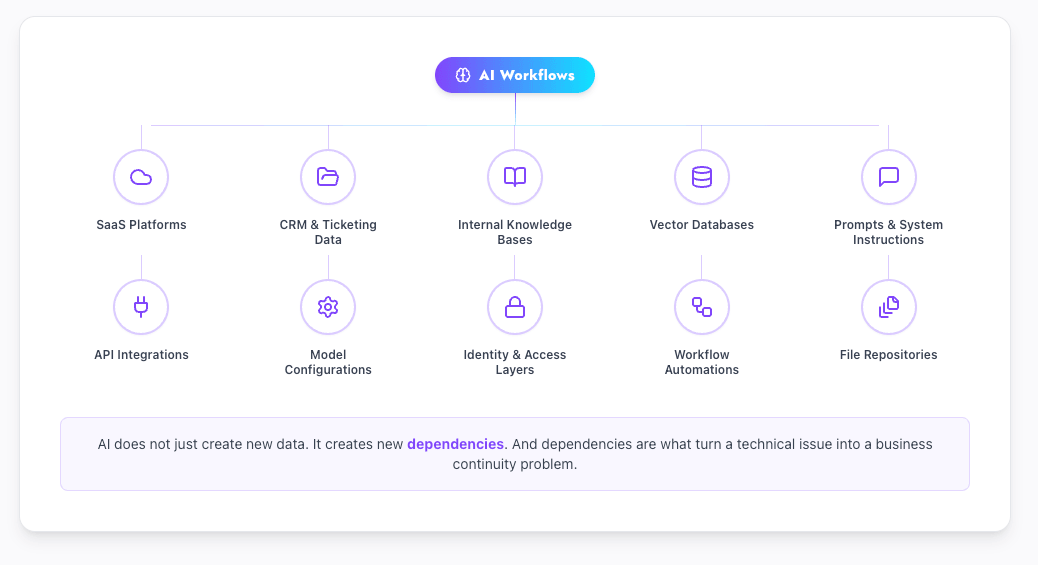

AI Is Creating New Continuity Dependencies Clients Do Not Fully Understand

This is where things get uncomfortable for many MSPs, because most clients are already further down this path than their recovery plans reflect.

Clients are adding AI tools, copilots, automation workflows, and AI-connected SaaS into daily operations. Few of them are asking the questions that would matter during an outage:

- Where does the AI tool store data?

- Which SaaS systems is it connected to?

- What permissions do AI agents and service accounts have?

- Are prompts, outputs, configurations, vector databases, and workflow automations recoverable?

- What happens if an AI workflow becomes business-critical and then breaks?

- What happens if an AI agent corrupts data across connected systems?

AI does not just create new data. It creates new dependencies. And dependencies are what turn a technical issue into a business continuity problem.

Consider a practical example. A client builds a sales workflow that pulls CRM data into an AI assistant, summarizes prospect notes, drafts follow-ups, and writes back to the CRM. If the integration breaks, the credentials rotate, the prompt logic gets corrupted, or the connected SaaS data gets restored to an older state, the entire workflow may stop functioning. The CRM data may be intact. The business process is not.

Most disaster recovery plans do not account for this. They focus on servers, file shares, and core applications. They do not document AI tools, model configurations, identity dependencies, or the SaaS data those workflows depend on.

That gap is exactly the kind of strategic conversation MSPs are well positioned to lead. An AI continuity assessment, even a lightweight one, can surface dependencies the client has not mapped. It also creates a natural follow-on for protection, monitoring, and recovery work.

The DR Stack Itself Is Becoming an Attack Surface

AI is increasingly built into disaster recovery tools, where it summarizes tickets, classifies alerts, recommends recovery steps, identifies clean restore points, and even triggers automated workflows.

That capability comes with new categories of risk. Three are worth flagging directly.

Data Poisoning Can Corrupt Recovery Decisions

If an AI system is trained on telemetry, logs, or backup metadata that has been manipulated, its outputs can be quietly wrong. A poisoned model might learn to ignore signs of a specific ransomware variant or misclassify a compromised restore point as safe (3). The system still appears to be working. The decisions it produces are flawed.

Prompt Injection Can Hijack AI-Connected Tools

If an AI assistant can read tickets, documents, calendar invites, logs, or emails, malicious instructions hidden inside those inputs can influence its behavior. This becomes a disaster recovery problem when the AI has permission to trigger workflows, access backup data, summarize sensitive information, or recommend recovery actions. A malicious instruction embedded in a ticket comment is a different category of risk than a malicious file attachment: nothing has to be opened or executed, and plain text can pass through controls that were built to scan files.

Over-Permissioned AI Agents Become Insider Risk

The risk is not that AI gives a bad answer. The risk is that an over-permissioned AI agent takes a bad action.

Service accounts, API tokens, automation scripts, and AI agents all need identity governance. That includes least privilege, step-up authentication for sensitive recovery actions, human approval for destructive operations, logging of AI-initiated actions, and clear separation between systems that recommend and systems that execute.
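The controls listed above can be sketched as a single gate that sits between an AI agent and the recovery tooling. This is a minimal illustration in Python, not a reference to any product's API: the `RecoveryGate` class, the action names, and the agent name are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical action names; a real tool would define its own.
DESTRUCTIVE_ACTIONS = {"delete_restore_point", "overwrite_production", "failover"}


@dataclass
class RecoveryGate:
    """Separates what an AI agent may recommend from what actually executes."""
    allowed_actions: set                      # least-privilege allow-list for this agent
    audit_log: list = field(default_factory=list)

    def request(self, agent: str, action: str, approved_by: Optional[str] = None) -> bool:
        """Return True only if the requested action is permitted to run."""
        if action not in self.allowed_actions:
            decision = "denied: not in allow-list"
        elif action in DESTRUCTIVE_ACTIONS and approved_by is None:
            decision = "held: human approval required"
        else:
            decision = "allowed"
        # Every AI-initiated request is logged, whatever the outcome.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision == "allowed"


gate = RecoveryGate(allowed_actions={"list_restore_points", "failover"})
gate.request("triage-agent", "list_restore_points")             # read-only, runs
gate.request("triage-agent", "failover")                        # held until a human approves
gate.request("triage-agent", "failover", approved_by="on-call") # runs with approval
```

The design choice worth noting is that the agent never calls the recovery tooling directly. It can only ask the gate, and the gate records the request even when the answer is no.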

If AI is going to be part of the recovery process, the AI itself needs to be governed. Otherwise, the thing helping the MSP recover becomes part of the failure.

Where AI Can Actually Improve Disaster Recovery

The risks are real. So is the upside, when AI is applied with discipline.

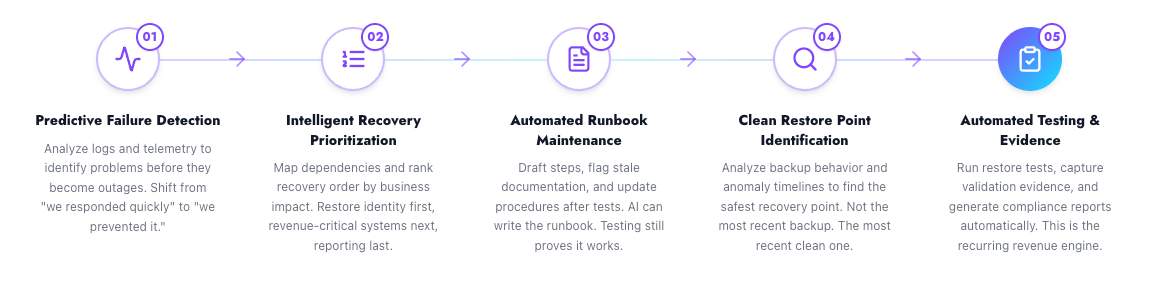

Predictive Failure Detection

AI can analyze logs, telemetry, system performance, storage behavior, and backup job trends to identify problems earlier than threshold-based alerts. Subtle deviations that traditional monitoring misses, such as gradual storage performance changes, abnormal authentication patterns, or backup job behavior drift, can be flagged before they become outages.
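To make the idea of drift concrete, here is a minimal Python sketch that compares recent backup job durations against an earlier baseline period. It is a simple statistical check standing in for whatever model a real tool uses, and the window sizes, threshold, and sample durations are illustrative assumptions rather than tuned values.

```python
from statistics import mean, stdev


def duration_drift(durations_min, baseline_n=14, recent_n=7, z_threshold=2.0):
    """Compare the mean of the most recent jobs to an earlier baseline period.

    Returns (z_score, drifted). A static per-job alert can miss this kind of
    slow change because no single job looks abnormal on its own.
    """
    if len(durations_min) < baseline_n + recent_n:
        raise ValueError("not enough job history to compare")
    baseline = durations_min[:baseline_n]
    recent = durations_min[-recent_n:]
    shift = mean(recent) - mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # Flat baseline: any change in the mean counts as drift.
        return (float("inf") if shift else 0.0), shift != 0
    z = shift / sigma
    return z, abs(z) > z_threshold


# Illustrative data: every job is still under a 45-minute static alert,
# but the last week is creeping upward.
history = [30, 31, 29, 30, 32, 30, 31, 29, 30, 31, 30, 29, 31, 30,
           33, 34, 35, 36, 37, 38, 39]
z_score, drifted = duration_drift(history)
```

In this example `drifted` is true even though no individual job would have tripped a fixed threshold, which is the gap the paragraph above describes.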

For MSPs, this changes the conversation from “we responded quickly” to “we prevented the outage.” That is a more defensible value statement and an easier one to charge for.

Intelligent Recovery Prioritization

During an outage, every department thinks its system is the most important. AI can help map dependencies and rank recovery order based on business impact and technical sequencing.

For example:

- restore identity and authentication first because most other systems depend on it

- restore payment systems before internal reporting

- prioritize systems tied to revenue, patient care, legal obligations, or customer communication

- avoid restoring an application before its database, DNS, or storage dependency is ready

This is not a replacement for runbook design. It is a way to keep the right order during a high-pressure event when humans are tired and time is short.
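The ordering rules above amount to a dependency graph with a business impact score on each system. The following is a minimal Python sketch under stated assumptions: the system names and impact scores are hypothetical, and it uses the standard library's `graphlib.TopologicalSorter` (Python 3.9 or later) rather than any recovery product's logic.

```python
import heapq
from graphlib import TopologicalSorter


def recovery_order(depends_on, impact):
    """Order systems so dependencies come first and, among systems that are
    ready at the same time, higher business impact is restored sooner.

    depends_on: system -> set of systems that must be restored before it
    impact:     system -> impact score (higher means more urgent)
    """
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    ready, order = [], []
    while sorter.is_active():
        for system in sorter.get_ready():
            # Negate the score so the highest-impact system pops first.
            heapq.heappush(ready, (-impact.get(system, 0), system))
        _, system = heapq.heappop(ready)
        order.append(system)
        sorter.done(system)  # unlocks anything that was waiting on it
    return order


# Hypothetical environment for illustration only.
depends_on = {
    "identity": set(),
    "dns": set(),
    "database": {"identity", "dns"},
    "payments": {"identity", "database"},
    "reporting": {"identity", "database"},
}
impact = {"identity": 10, "dns": 9, "database": 8, "payments": 9, "reporting": 2}
plan = recovery_order(depends_on, impact)
```

Here identity and DNS come first, the database follows once both are back, and payments is restored ahead of internal reporting.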

Automated Runbook Maintenance

AI can help draft recovery steps, summarize test results, identify missing dependencies, flag conflicts between the runbook and real infrastructure, and turn post-test lessons into revised procedures.

The honest limit here matters. AI can write a runbook. It cannot prove the runbook works. That still requires testing.

Clean Restore Point Identification

This is one of the most useful applications for ransomware response. AI can analyze backup behavior, file change patterns, encryption signatures, and anomaly timelines to help identify the safest recovery point.

The key part is the change in the question being asked. Instead of asking, “Which backup completed most recently?” the better question becomes, “Which backup is the most recent clean point we can trust?” That distinction is the difference between restoring into a reinfection and restoring into a working environment.
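That change in the question can be expressed in a few lines. The Python sketch below is illustrative only: the `RestorePoint` fields, the `scan_clean` flag, and the anomaly timestamp are hypothetical stand-ins for whatever a real backup and detection stack reports.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class RestorePoint:
    completed_at: datetime
    scan_clean: bool  # hypothetical result of a malware or anomaly scan


def latest_trusted_point(points, first_anomaly_at) -> Optional[RestorePoint]:
    """Most recent restore point that completed before the first known
    anomaly and also passed scanning. Returns None if nothing qualifies."""
    candidates = [
        p for p in points
        if p.completed_at < first_anomaly_at and p.scan_clean
    ]
    return max(candidates, key=lambda p: p.completed_at, default=None)


# Illustrative timeline: the newest backup is not the one to restore.
points = [
    RestorePoint(datetime(2025, 3, 1), scan_clean=True),
    RestorePoint(datetime(2025, 3, 2), scan_clean=True),
    RestorePoint(datetime(2025, 3, 3), scan_clean=False),  # before the anomaly, but flagged
    RestorePoint(datetime(2025, 3, 4), scan_clean=True),   # after the anomaly
]
chosen = latest_trusted_point(points, first_anomaly_at=datetime(2025, 3, 3, 12, 0))
```

In this example the function returns the March 2 point, skipping both the most recent backup and the one that predates the anomaly but failed scanning.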

Automated Recovery Testing and Evidence

This is the recurring revenue engine.

AI can automate restore testing, capture screenshot or video evidence, run validation checks, generate executive summaries, produce compliance reports, and track variance against RTO and RPO commitments. The output is not just a restored system. It is documented proof that the recovery worked.
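Tracking variance against RTO and RPO is simple arithmetic once the timestamps are captured. The Python sketch below assumes the usual definitions (RTO as time to restore service, RPO as the age of the last good backup at the moment of the outage); the function name and all sample values are illustrative.

```python
from datetime import datetime, timedelta


def recovery_variance(outage_start, service_restored, last_good_backup, rto, rpo):
    """Compare one recovery test against its RTO and RPO commitments.

    rto and rpo are timedelta targets. A positive variance means the
    target was missed by that amount.
    """
    actual_rto = service_restored - outage_start   # how long the service was down
    actual_rpo = outage_start - last_good_backup   # how much recent data was lost
    return {
        "rto_variance": actual_rto - rto,
        "rto_met": actual_rto <= rto,
        "rpo_variance": actual_rpo - rpo,
        "rpo_met": actual_rpo <= rpo,
    }


# Illustrative test run: values are made up for the example.
result = recovery_variance(
    outage_start=datetime(2025, 6, 1, 9, 0),
    service_restored=datetime(2025, 6, 1, 12, 30),
    last_good_backup=datetime(2025, 6, 1, 5, 0),
    rto=timedelta(hours=4),
    rpo=timedelta(hours=2),
)
```

In this made-up run the RTO is met with 30 minutes to spare, while the RPO is missed by two hours. That is the kind of gap an evidence report should surface before a real incident does.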

Clients trust what they can see demonstrated. Insurers, auditors, and boards do too.

The MSP Margin Opportunity

AI-driven disaster recovery is not just a technical upgrade. It is a service delivery upgrade.

The MSP business model is under pressure. Client expectations are rising. Skilled labor is hard to hire. Basic managed services are increasingly commoditized. AI changes the math by reducing manual labor across exactly the activities that have historically made disaster recovery hard to deliver profitably:

- backup monitoring

- failed job investigation

- ticket triage

- client reporting

- runbook updates

- compliance documentation

- recovery test summaries

- anomaly investigation

- restore point analysis

Recent MSP-focused industry research has found that AI triage and routine task automation can reduce operational costs by 25 to 40 percent and meaningfully reduce human-handled ticket volume (4). That kind of efficiency unlocks the part of the business model MSPs actually want: technicians focused on higher-value strategic work, not chasing failed backup jobs.

The margin opportunity is not “AI-powered backup.” It is turning recovery readiness into a repeatable service that can be tested, reported, and renewed.

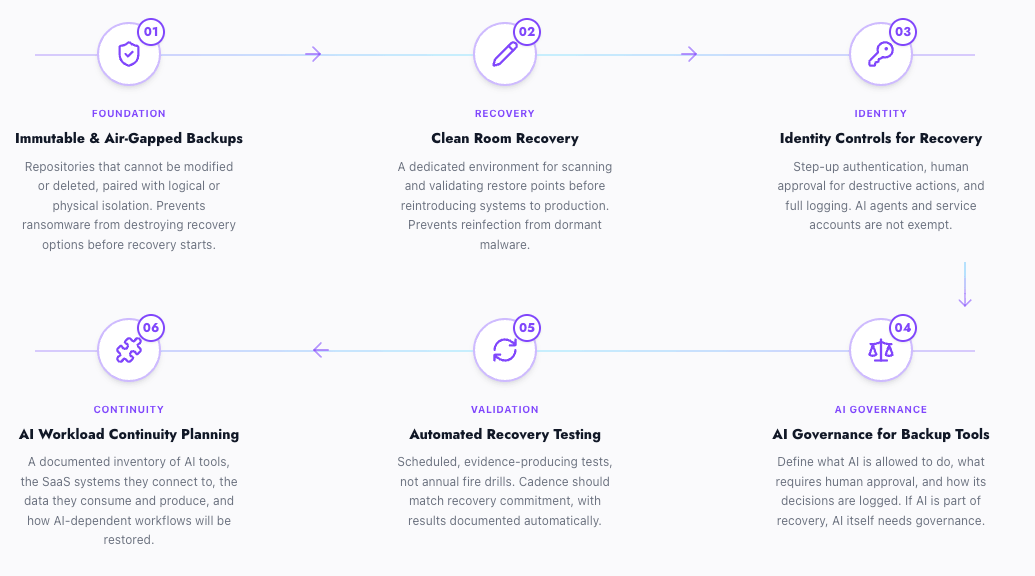

What MSPs Should Add to Their DR Offering Now

If the threat model has changed, the offering needs to change with it. Six elements should be on the roadmap.

Immutable and Air-gapped Backups

Backup repositories that cannot be modified or deleted, paired with logical or physical isolation, prevent ransomware from destroying recovery options before recovery starts.

Clean Room Recovery

A dedicated environment for scanning and validating restore points before reintroducing systems to production prevents reinfection from dormant or polymorphic malware.

Identity Controls for Recovery Actions

Require step-up authentication, human approval for destructive actions, and logging of every recovery operation. AI agents and service accounts should not be exempt from this.

AI Governance for Backup and Recovery Tools

If AI is making recommendations or triggering actions, define what it is allowed to do, what requires human approval, and how its decisions are logged.

Automated Recovery Testing

Run scheduled, evidence-producing tests rather than annual fire drills. Testing cadence should match the recovery commitment, and results should be documented automatically.

AI Workload Continuity Planning

Maintain a documented inventory of the AI tools in use, the SaaS systems they connect to, the data they consume and produce, and how AI-dependent workflows will be restored. Most clients do not have this. Most MSPs are well positioned to build it.
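An inventory like this does not need special tooling to start. The Python sketch below shows one possible shape for a record, using the CRM follow-up workflow from earlier in the article as sample data. The `AIWorkflow` class, its field names, and the account name are hypothetical, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class AIWorkflow:
    name: str
    connected_saas: list          # systems the workflow reads from or writes to
    service_accounts: list        # identities the workflow depends on
    recoverable_artifacts: dict   # artifact name -> True if a tested restore path exists
    business_critical: bool = False

    def gaps(self):
        """Artifacts with no known way to restore them."""
        return sorted(k for k, ok in self.recoverable_artifacts.items() if not ok)


def continuity_gaps(workflows):
    """Business-critical workflows that have at least one unrecoverable artifact."""
    return {w.name: w.gaps() for w in workflows if w.business_critical and w.gaps()}


# Sample entry for illustration only.
inventory = [
    AIWorkflow(
        name="sales-follow-up",
        connected_saas=["CRM"],
        service_accounts=["svc-ai-crm"],
        recoverable_artifacts={
            "prompts": True,
            "workflow_config": True,
            "vector_db": False,
            "api_credentials": False,
        },
        business_critical=True,
    ),
]
report = continuity_gaps(inventory)
```

Even a list this small gives the assessment a concrete deliverable: which business-critical workflows exist, and which parts of them could not be restored today.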

How to Package This for Clients

The goal is not to sell every client every component. It is to give MSPs clear ways to monetize the work without overcomplicating the conversation.

A few practical service shapes work well:

- A fixed-fee AI disaster recovery readiness assessment that inventories AI tools, reviews SaaS data protection, evaluates identity controls, examines RTO and RPO alignment, audits recovery testing history, and delivers a prioritized gap report. This is a strong fit for clients experimenting with copilots, AI agents, or workflow automation. It creates a natural follow-on engagement for remediation work.

- A ransomware recovery readiness offering built around immutable backup storage, isolated recovery, clean restore point validation, identity recovery planning, and quarterly testing with executive reporting. This sells more easily than broad “cyber resilience” because it ties to a specific scenario clients already worry about.

- A compliance-ready recovery service that bundles automated test evidence, recovery validation reports, written DR documentation, and access control review. This works for clients in healthcare, finance, legal, education, and government-adjacent verticals where audit and insurance demands are increasing.

- An AI workload continuity add-on for clients running AI in production. This is the most differentiated offering on the list. Few MSPs are packaging it cleanly yet. The positioning is direct: if AI is becoming part of how the client operates, AI needs to become part of the continuity plan.

Each of these can be priced as a standalone engagement, bundled into existing managed services tiers, or used as an upgrade path from basic backup.

Make Recovery Easier to Prove With Cloud IBR

AI is changing what disaster recovery has to cover, but it does not change the fundamental MSP requirement: clients still need recovery they can test, prove, and deliver without unnecessary cost or complexity.

That is what Cloud IBR is built for.

Built for MSPs, Cloud IBR automates the recovery of Veeam backups into on-demand bare metal cloud infrastructure, making it easier to test disaster recovery plans, validate recoverability, and support stronger recovery commitments without the cost of always-on standby environments.

With Cloud IBR, MSPs can:

- Run automated disaster recovery tests on a scheduled basis

- Validate whether recovery objectives can actually be met

- Generate reports to support compliance, cyber insurance, and client accountability

- Recover from existing Veeam backups without replication-heavy overhead

- Avoid paying for idle recovery infrastructure between events

That makes it easier to deliver the kind of evidence-backed recovery service this article has been describing, without rebuilding the underlying backup architecture or maintaining infrastructure that sits idle most of the year.

The MSPs That Win Will Prove Recovery, Not Just Promise Backup

AI will not replace disaster recovery fundamentals. MSPs still need clean backups, tested restores, documented runbooks, protected identities, and clear recovery objectives.

But AI does change the stakes.

Attacks are moving faster. Extortion is becoming more targeted. Clients are adding AI into daily operations without always understanding the continuity risk. At the same time, AI gives MSPs new ways to automate validation, improve recovery planning, reduce manual work, and package higher-value resilience services.

The MSPs that win will not be the ones that simply claim to offer AI-powered DR. They will be the ones that can prove clean, fast, compliant recovery when it matters.

Sources:

Frequently Asked Questions

How is AI changing disaster recovery for MSPs?

AI is changing DR in two ways. Attackers use AI to move faster, automate reconnaissance, and turn stolen data into targeted extortion. MSPs can use AI to detect risk earlier, validate backups, identify clean restore points, and automate testing and reporting. The combination means MSPs need to plan recovery for faster attacks while using AI to deliver more evidence-backed services.

Which AI dependencies should a disaster recovery plan cover?

AI workflows often depend on SaaS data, CRM and ticketing systems, internal knowledge bases, vector databases, prompts, model configurations, API integrations, and identity layers. If any of these break or get corrupted, the AI-enabled process may stop working even when core systems are intact. Most disaster recovery plans were designed before these dependencies existed.

How do MSPs package and price these services?

Most MSPs use a combination of fixed-fee assessments, tiered managed service bundles, and add-on services. Common shapes include a paid AI continuity assessment, a ransomware recovery readiness offering, a compliance-focused DR package, and an AI workload add-on. Pricing is typically tied to user count, workload count, or business outcomes rather than device count.

Do MSPs need always-on standby infrastructure to deliver this?

No. On-demand recovery models let MSPs spin up infrastructure only when recovery or testing is needed, then shut it down afterward. That avoids paying for idle recovery environments and aligns recovery cost with actual use. Cloud IBR uses this model to recover Veeam backups onto bare metal cloud infrastructure on demand.

How often should disaster recovery be tested?

Testing cadence should match the recovery commitment and the rate of change in the environment. Critical workloads typically need more frequent restore validation than lower-tier systems. Annual testing is rarely sufficient when AI workflows, SaaS integrations, and identity systems are changing constantly. Automated testing makes more frequent validation operationally feasible.